Intelligence saturation

AI is neither bringing the singularity nor is it a nothingburger; making things requires intelligence and physical inputs

Here’s a thought experiment from my day job as a neuroscientist.

Imagine you’re trying to bat in baseball. Your brain does some genuinely impressive computation: predicting trajectories, coordinating dozens of muscles, adjusting for wind, and putting all of this together using Bayesian algorithms (fun example from professional baseball here). But here’s the thing: making your brain infinitely smarter would not allow you to hit every ball. Some are too far, some are too fast, and your muscles don’t quite work like those of professional baseball players. At some point, regardless of the intelligence inputs, you hit physical limits. Your arm only moves so fast. Your muscles contract at fixed rates. Even if you can somewhat predict what the pitcher will do, there are limits. No amount of genius overcomes the physics of your body.

This, in a nutshell, is intelligence saturation. For a given task, more intelligence helps. But it helps less and less as you add more intelligence. And I think it’s the key concept missing from most debates about AI and the future of work.

The Great AI Disagreement

On one side, we have AI researchers who see exponential curves everywhere (compute doubling, costs halving every six months) and conclude we’re headed for economic singularity. The AI folks see that “intelligence” is scaling at unbelievable rates. Token prices for LLMs halve roughly every six months. That’s insane. I co-direct CIFAR LMB, so I see that side of the discussion a lot.
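To get a feel for what "halving every six months" compounds to, here is a tiny back-of-the-envelope sketch. The function name and the clean six-month halving time are my simplifying assumptions; the real price curve is only roughly like this.

```python
def price_after(years, start_price=1.0, halving_time_years=0.5):
    """Price after `years` if it halves once every `halving_time_years`.

    Assumes a perfectly steady halving rate, which is an idealization.
    """
    return start_price * 0.5 ** (years / halving_time_years)


for years in (1, 2, 5):
    fraction = price_after(years)
    print(f"after {years} years: {fraction:.6f} of the starting price")
```

Five years at this pace is ten halvings, so the price falls by a factor of 1024.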

On the other side, economists look at 200 years of steady growth despite countless “revolutionary” technologies and shrug: AI is just another general-purpose technology, nothing special. My wife Ioana Marinescu (who happens to be one of the sharpest labor economists I know, yes, I’m biased, but I’m also right) is in the NBER and hears a fair bit of that take.

So, we got together and basically spent a year thinking and talking about why we have these distinct takes, and worked to produce a credible overarching frame. The result is this paper on Intelligence Saturation and the future of work.

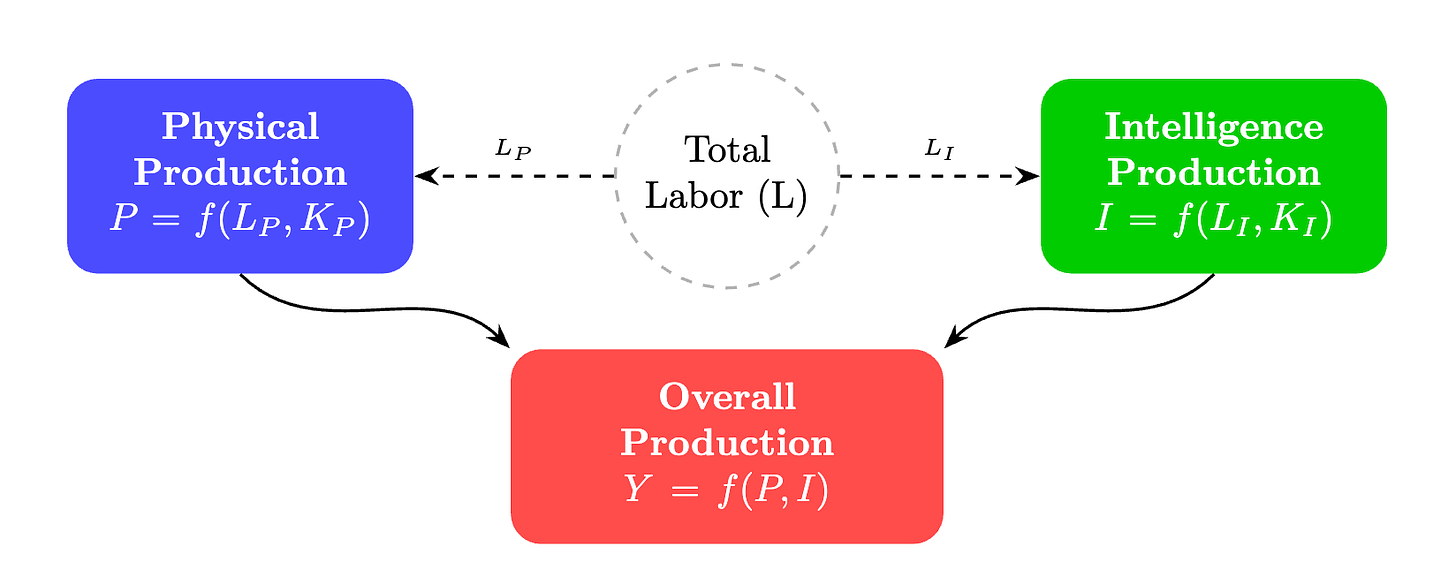

Physical Meets Intelligence

Economists traditionally divide the economy into two complementary sectors, capital and labor. We can replace capital with labor and vice versa, but that replacement is difficult: the more we replace, the harder it becomes to replace more. In our paper we argue that it is crucial to also divide the economy into the intelligence parts (fast scaling on the artificial side) and the physical parts (slow scaling on the physical side).

The intelligence sector comprises things that can be done virtually, remotely, purely through information processing. And the physical sector comprises things that require bodies, presence, attention, manipulation of the actual world.

We believe that AI may be eating the pure intelligence sector alive. But here’s the catch: intelligence and physical are complements, not substitutes. You need both.

Think about education. AI can generate perfect lesson plans. But students still benefit enormously from a teacher in the room, the physical presence, the classroom management, the hands-on activities. COVID taught us this the hard way. Districts with remote learning saw significantly worse outcomes.

Or manufacturing. Smarter controllers can optimize production lines beautifully. But you still need the robots, the assembly equipment, the physical infrastructure. And those aren’t doubling in capability every six months.

Or healthcare. AI diagnostics are impressive. But someone still has to examine the patient, perform the surgery, administer the treatment. And to develop new cures you don’t just need to read the literature, you need to run randomized controlled trials on human subjects, who are very much a slow-growing resource.

Intelligence saturates because physical inputs don’t scale the same way. You can add infinite intelligence, but if physical capacity is fixed, returns eventually plateau.
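A minimal sketch of that plateau, assuming a standard CES (constant elasticity of substitution) aggregate of the two inputs. The function names, the equal share weights and the elasticity of 0.5 are illustrative assumptions of mine, not the calibration from the paper.

```python
def ces_output(intelligence, physical, share=0.5, sigma=0.5):
    """CES aggregate of an intelligence input and a physical input.

    sigma is the elasticity of substitution; sigma < 1 means the inputs
    are complements. sigma == 1 (the Cobb-Douglas limit) is not handled.
    """
    rho = (sigma - 1.0) / sigma
    return (share * intelligence ** rho
            + (1.0 - share) * physical ** rho) ** (1.0 / rho)


def saturation_ceiling(physical, share=0.5, sigma=0.5):
    """Limit of ces_output as intelligence grows without bound (sigma < 1)."""
    rho = (sigma - 1.0) / sigma
    return (1.0 - share) ** (1.0 / rho) * physical


physical = 1.0  # physical capacity held fixed
for intelligence in (1, 10, 100, 1000, 10000):
    y = ces_output(intelligence, physical)
    print(f"intelligence={intelligence:>6}  output={y:.4f}")
print("ceiling:", saturation_ceiling(physical))
```

With these toy numbers, each tenfold increase in intelligence buys less extra output than the one before, and output never exceeds the ceiling set by the fixed physical input.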

What This Means for Wages

Here’s where it gets interesting (and concerning).

In our model, as AI automates intelligence tasks, workers shift toward physical jobs. This creates two opposing forces on wages:

Scale effect: More AI boosts intelligence output, which helps physical workers too (complements, remember?)

Reallocation effect: More workers crowding into physical jobs pushes wages down

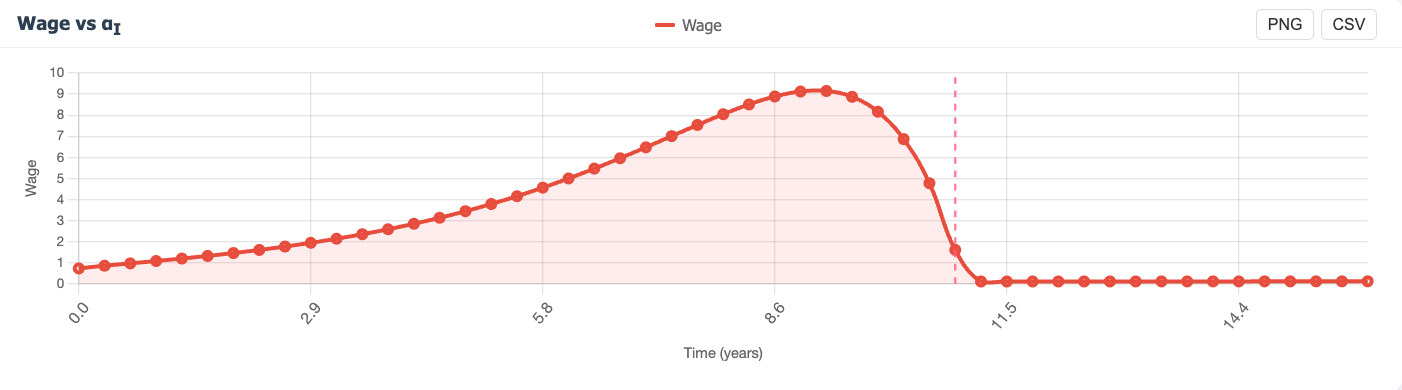

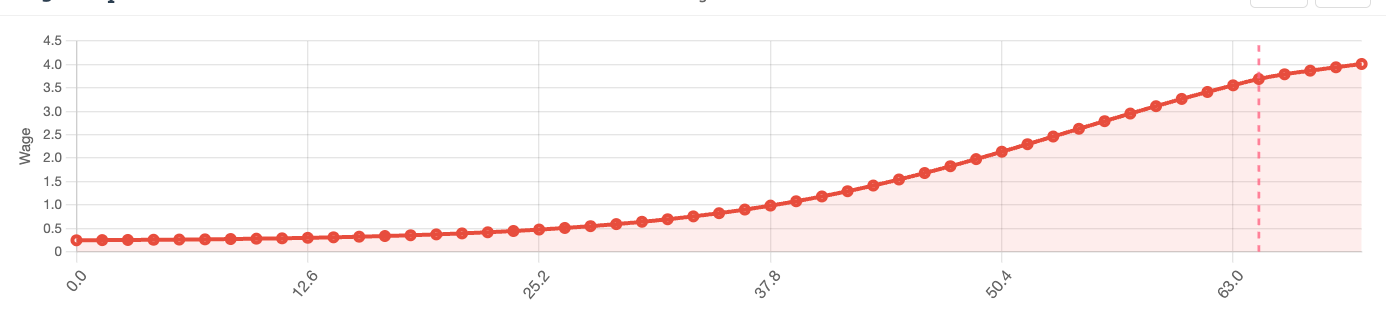

Early in automation, the scale effect dominates. Wages rise. Later, as most intelligence tasks are automated and workers pile into the physical sector, the reallocation effect wins. Wages fall. The result? Depending on parameters, there is often a hump-shaped trajectory. Wages up, then wages down. This isn’t a prediction; it depends on parameters we don’t know precisely. But it’s a possibility that early wage gains from AI are positive and long-term effects are negative; we shouldn’t become complacent.
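The two forces can be seen separately in a toy calculation. This is a sketch under loud assumptions, not the model from the paper: I treat the amount of intelligence output and the number of physical workers as knobs I turn by hand, assume a CES aggregate with complements (sigma = 0.5), and assume diminishing returns to physical labor (exponent beta = 0.7, standing in for fixed physical capital). All names and numbers are hypothetical, and the sketch does not try to reproduce the hump itself.

```python
def physical_wage(intelligence, physical_workers,
                  share=0.5, sigma=0.5, beta=0.7):
    """Marginal product of one more physical worker in a toy CES economy.

    Output:   Y = (share * I**rho + (1 - share) * P**rho) ** (1 / rho)
    Physical: P = physical_workers ** beta   (diminishing returns)
    """
    rho = (sigma - 1.0) / sigma
    physical = physical_workers ** beta
    output = (share * intelligence ** rho
              + (1.0 - share) * physical ** rho) ** (1.0 / rho)
    # Chain rule: dY/dL = dY/dP * dP/dL
    d_output_d_physical = (1.0 - share) * (output / physical) ** (1.0 - rho)
    d_physical_d_workers = beta * physical_workers ** (beta - 1.0)
    return d_output_d_physical * d_physical_d_workers


# Scale effect: more intelligence output, same number of physical workers.
print("scale:       ", physical_wage(1, 0.5), "->", physical_wage(10, 0.5))

# Reallocation effect: same intelligence output, more physical workers.
print("reallocation:", physical_wage(10, 0.5), "->", physical_wage(10, 0.9))
```

In this toy setup the first line shows the wage going up and the second shows it going down. Which force wins along an actual automation path depends on how fast each knob moves, which is exactly what the parameters decide.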

The Key Parameter Nobody’s Measuring

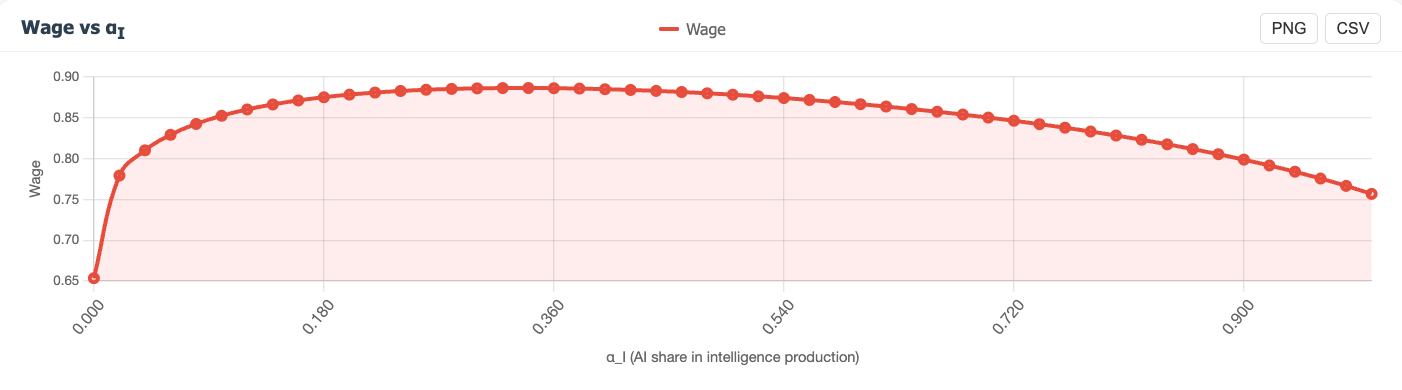

Everything hinges on one question: how substitutable are physical and intelligence outputs?

If you can swap virtual services for in-person ones easily, high levels of automation hit wages hard. If they’re strong complements, meaning people genuinely value physical goods and in-person services, workers in the physical sector are protected.

This is measurable. We should be measuring it.

Policy Implications

Three quick takes:

Slow automation, invest in physical capital. If we’re racing toward the downward slope of that wage hump, buying time for physical sector investment could help.

Protect physical sector complementarity. Policies that make virtual services perfect substitutes for in-person ones might boost output but hurt wages during the transition.

Watch the physical employment share. In our model, wages can’t fall from automation unless the share of workers in physical jobs rises (equivalently, the share of workers in intelligence jobs falls). That’s your canary.

The Bottom Line

The singularity narrative assumes unbounded returns to intelligence. But you can’t build a car with pure computation. You can’t cook a meal with algorithms. You can’t construct a building with cleverness alone.

The physical world imposes constraints that intelligence can only optimize against, not eliminate.

That’s not a reason for complacency—the transition could still be rough. But it’s a reason to think the AI transformation will be significant yet bounded, not infinite.

We built an interactive tool if you want to play with the parameters yourself. You can see how for different parameter settings the results are, indeed, singularity or nothingburger. But for the parameters we consider most realistic, the results should be somewhere in between. Intelligence is powerful. But it saturates.

Parts of this were used as the basis of the following article in Transformer.

Tool advertisement

I used the development of this tool to test planyourscience.com, the app that tells you that your scientific ideas are not yet great.

Interesting post. It seems to me that the main crux of the argument is on robots, so I would have liked to see more attention there. If robots (writ broadly, including humanoids, drones, AI-designed factory automation, etc.) are currently bottlenecked mainly by intelligence rather than hardware -- which some evidence suggests -- then sufficiently "intelligent" AI should also accelerate robotics to close to the same degree, in which case all wages get low without the relative returns for physical labor.

(Also, as another wrinkle, it doesn't really consider potentially transformative neurotechnologies, like whole brain emulation, which would also be likely to happen very fast if intelligence is fully automated.)

I'm personally skeptical of near-term automation of intelligence.

There's a huge factor missing here, which is the impact of falling prices! If AI takes over an industry, it's because it can do it cheaper, and if it can do it cheaper, the prices of its products and services will go down. So we shouldn't be looking at the wage curve, but rather the purchasing power curve, if what we're concerned with is human well-being.